OpenAI, for its part, has contended that training an AI system falls under fair use protections, especially given the extent to which AI transforms the underlying training data into something new.

A spokesman for OpenAI said the firm respects authors’ rights and believes they should “benefit from AI technology”.

“We’re having productive conversations with many creators around the world, including the Authors Guild, and have been working cooperatively to understand and discuss their concerns about AI,” the spokesman said of America’s oldest and largest organisation for published writers.

“We’re optimistic we will continue to find mutually beneficial ways to work together to help people utilise new technology in a rich content ecosystem.”

Nevertheless, the publishing industry is pushing back as it reckons with a software boom that’s given anyone with Wi-Fi the power to automatically generate reams of text.

In addition to Preston’s suit, various other groups of authors are pursuing their own proposed class action suits against OpenAI.

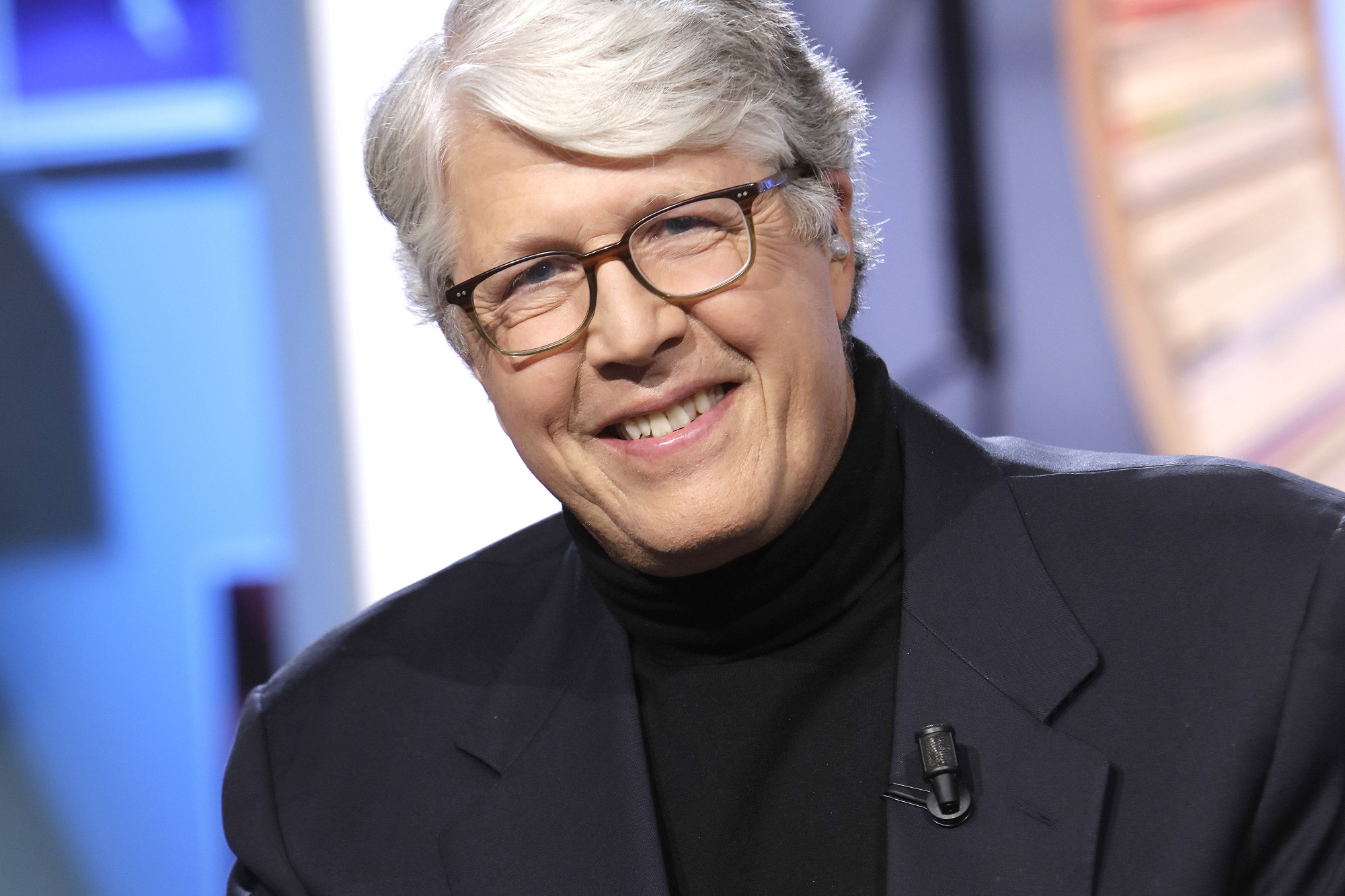

“Everybody’s realising to what extent their data, their information, their creativity, has been absorbed,” says Ed Nawotka, an editor at American trade news publication Publishers Weekly. There is, in the industry, a degree of “abject panic”, he says.

A different suit recently found Paul Tremblay (The Cabin at the End of the World) and Mona Awad (Bunny) suing OpenAI for copyright violations – the company is trying to get that one mostly dismissed too – while Michael Chabon (The Yiddish Policemen’s Union) is a plaintiff in two additional legal actions that are targeting OpenAI and Meta, respectively.

And in July, the Authors Guild – a professional trade group, not a labour union – sent several technology companies an open letter calling for consent, credit and fair compensation when writers’ works are used to train AI models.

The lawsuit in which Preston is involved, which features 17 other named plaintiffs including the Authors Guild, claims that OpenAI copied the authors’ works “without permission or consideration” to train AI programs that now compete with those authors for readers’ time and money.

The suit also takes issue with ChatGPT’s generation of derivative works, or “material that is based on, mimics, summarises, or paraphrases [the] Plaintiffs’ works, and harms the market for them”.

The plaintiffs are seeking damages for their lost licensing opportunities and “market usurpation”, as well as an injunction against future such practices, on behalf of American fiction authors whose copyrighted works were used to train OpenAI software.

“They didn’t ask our permission, and they aren’t compensating us,” Preston says of OpenAI. “What they’ve done is created a very valuable commercial product which can reproduce our voices. … It’s basically theft of our creative work on a grand scale.”

Since the plaintiffs’ books aren’t freely available on the open web, he adds, OpenAI “almost certainly” accessed them via alleged piracy sites such as the file-sharing platform LibGen.

OpenAI declines to answer a question about whether the plaintiffs’ books were part of ChatGPT’s training data or accessed via file-sharing sites such as LibGen. In a statement to the US Patent and Trademark Office cited in the Authors Guild suit, OpenAI stated that modern AI systems are sometimes trained on publicly available data sets that include copyrighted works.

Connelly never got to decide whether his books would be used to train an AI, he says, but if he’d been asked – even if there were money on the table – he would probably have opted out.

The idea of ChatGPT writing an unofficial Bosch sequel strikes him as a violation; even when Amazon adapted the series into a TV show, he says, he had some control over the scripts and casting.

“These characters belong to us,” Connelly says. “They come out of our heads. I even put stuff in my will about [how] no other author can carry the Harry Bosch torch after I’m gone. He’s mine, and I don’t want anyone else telling his story. I certainly don’t want a machine telling it.”

But whether the law will allow the machines to do so is a different question.

The various lawsuits against OpenAI allege copyright violations. But copyright law – and especially fair use, the area of law governing when copyrighted work can be incorporated into other endeavours, such as for the sake of education or criticism – still doesn’t offer a cut-and-dried answer to how these lawsuits will shake out.

“We’ve got kind of a push and pull right now in the case law,” says intellectual property lawyer Lance Koonce, a partner at the law firm Klaris, pointing to two recent US Supreme Court cases that offer competing models of fair use.

“These AI cases – and especially the Authors Guild case [against OpenAI] – fall into that tension,” Koonce says.

In its patent office statement, OpenAI argued that training artificial intelligence software on copyrighted works “should not, by itself, harm the market for or value of copyrighted works” because the works are being consumed by software rather than real people.

Outside of legal avenues, stakeholders are already pitching solutions to this tension.

Suman Kanuganti, the chief executive of AI messaging platform Personal.ai, says the tech industry will probably adopt some sort of attribution standard that allows people who contribute to an AI’s training data to be identified and compensated.

“Once you build the models with known, authenticated data units, then technologically, it’s not a challenge,” Kanuganti says. “And once you solve that problem … the economic association then becomes easier.”

Preston, the adventure novelist, agrees that there may yet be a path forward.

Licensing books to software developers through a centralised clearing house could provide authors with a new income stream while also securing high-quality training data for AI companies, he says, adding that the Authors Guild tried to set up such an arrangement with OpenAI at one point but that the two sides were unable to reach an agreement.

“We were trying to get them to sit down with us in good faith; we’re not opposed at all to AI,” Preston says. “It’s not a zero-sum game.”
